Someone has pointed out it would be a lot easier for AI to replace a CEO than a developer.

AI won’t code everything by next year, and not in 5 years either, as it requires understanding context and actual reasoning, which AI doesn’t have and won’t have for a long time to come. But the day that AI can code itself is the day that humanity is done for.

I installed Manjaro on one of my computers and I wanted to see if ChatGPT, Claude, Gemini or Mistral were useful in eliminating some of the quirks you always encounter after a fresh install. So whenever I stumbled upon an issue, I asked them how I could solve it.

None, I’d like to emphasise this: NONE of their tips was helpful.

And Mr Masad wants me to believe AIs would be able to program whole applications within a year?

MUAHAHAHA

ok then.

all developers should quit their jobs now. like right now. also, don’t bother with foss anymore. AI can generate it all by itself. all foss projects should close all their public repos entirely. no more public code repos, no more software development.

everybody go home, AI has it covered. we can just get new, more fulfilling jobs in farming, logging, or construction.

bye greedy AI fuck-sticks. We’ll be back when you assholes snap your AI neck trying to suck your own dicks.

Who is this guy? Some CEO? Isn’t it more cost-effective to replace him with AI?

What’s weird is he’s the CEO of Replit.

Replit’s product is a website where you can write a snippet of code and run it without having to install anything. An activity that human developers would do to test out something.

So if his prediction comes true, his product will lose all value.

Maybe his expectation is that companies will buy his product because people will have to feed the AI-generated code into it to test it, instead of having humans manually review everything. Basically telling people to create a problem so he can sell his solution.

Are there no AI products to replace CEOs currently?

Literally any chatbot, probably

We will find out just how good AI is with coding in a few months when it replaces the SSA’s COBOL.

My guess? Catastrophic.

And instead of going back, they’ll say some kid deleted the old stuff after copying it into the new system.

Of course they will delete the old system entirely. Saving it would just be wasteful, and intelligent.

Lol, yeah. Keep paying us developers to write that philosopher’s stone. For writing general AI my rate is 100x because it’s magic you can’t understand without being able to write code.

Listening to this guy talk shit is a waste of time

We are all so fucked.

As a coder, the majority of my job isn’t writing code. It’s translating the bullshit management says and the broken specs we’re given into what they both actually want, not what they said. There is never going to be an AI that fixes that.

As a developer, I literally laughed hard enough to choke a little.

I actually dare them to try. I’m really looking forward to the massive paychecks I’m going to get when companies are panicking to try to untangle all the absolute nonsense bullshit these AI companies are about to unleash into corporate codebases. The AI-slop bugfest will make the Y2K issue seem trivial. I’m so excited, the future looks very bright for human software developers.

My advice: Practice going over other people’s code with a fine-tooth comb looking for bad architecture, flaws and inefficiencies. You won’t always be right, you won’t find them all, but you’ll learn lots of skills you’ll need in the future. Whatever you do, don’t undersell yourselves. Remember that your experience is valuable, and AI has no experience, it just has a huge library it can shotgun “solutions” out of. Half the time they don’t even compile, never mind work properly or efficiently.

My advice: Practice going over other people’s code with a fine-tooth comb looking for bad architecture, flaws and inefficiencies.

I agree. Funny story, I wasn’t allowed to do code reviews at my current job for about 2 years because they thought my comb was too fine. Suddenly software quality is something they are really valuing and they’re allowing me to do code reviews again. Funny, that.

AI is probably going to transform how code is written, but I don’t think AI will fully replace programmers. At least not in the foreseeable future.

Most of a programmer’s work is maintaining existing code. This is something current AI models still struggle with.

Coding is totally obsolete, bro. AI can totally write all the code, trust me bro. You just gotta know how to tell it what code to write, like learn some keywords and stuff, bro. Like, as long as you check how it produces looping mechanisms and tell it when it should use polymorphism and stuff, it’ll totally do all the work bro. You don’t need to know how to code, just the right sequence of keywords and commands so the AI can write all the code.

Waiting for AI to take over CEO positions because they do nothing and you can replace them with a series of shell scripts.

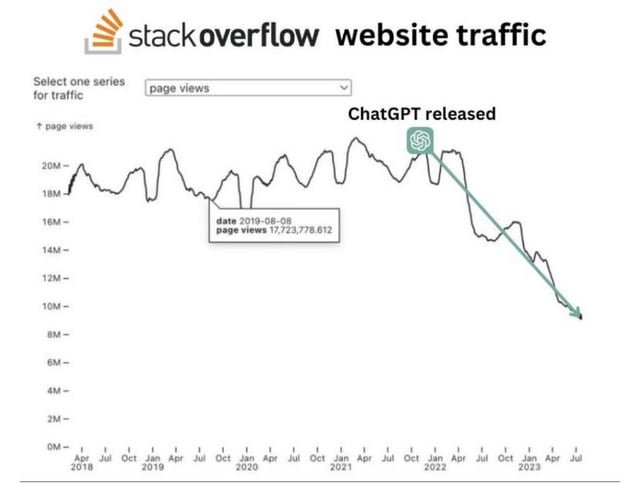

The idea of LLMs putting coders out of work at a large scale seems inherently self-defeating.

The LLMs needed to ingest a massive volume of code to get to their current level of proficiency. What will happen if they put all the coders out of work and Stack Overflow is down to just a small number of hobbyists? Will the LLMs just stop advancing?

I’m sure Sam Altman would say they are just about to have reasoning capabilities that will allow them to improve. But Sam Altman is not credible.

Sadly, it’s already happening with Stack Overflow.

Outdated and no source…